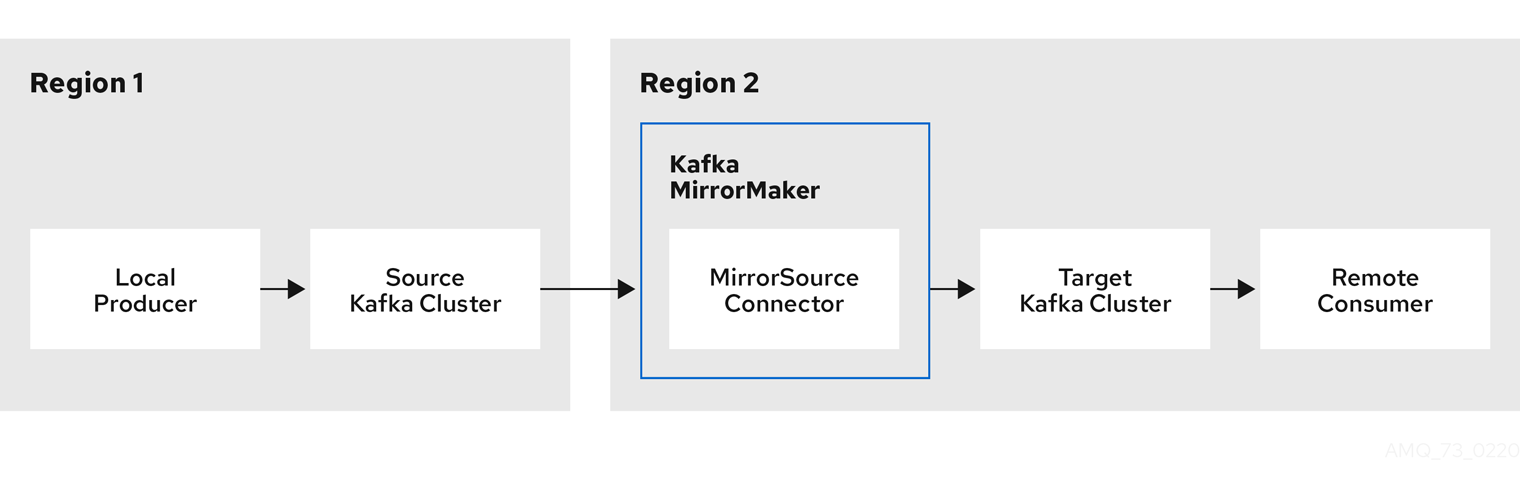

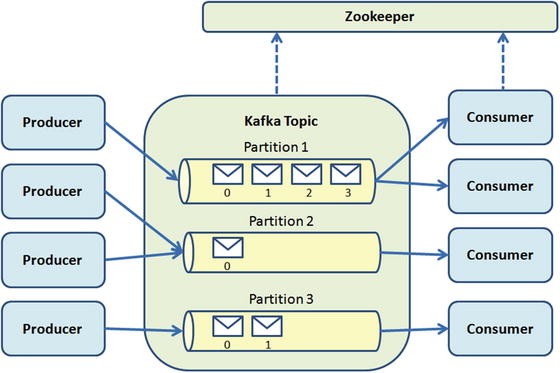

The message broker provides seamless integration, but there are two collateral objectives: the first is to not block the producers, and the second is to not let the producers know who the final consumers are.

Apache Kafka is a real-time publish-subscribe messaging system: open source, distributed, partitioned, replicated, and commit-log based. Its main characteristics are as follows:

- Distributed. A cluster-centric design that supports the distribution of messages over the cluster members while maintaining the semantics, so you can grow the cluster horizontally without downtime.
- Easy integration with different clients from different platforms, such as Java.
- Efficient O(1) data structures, which provide constant-time performance no matter the data size.
- Real time. The messages produced are immediately seen by consumer threads.
- High throughput. As we mentioned, all the technologies in the stack are designed to work on commodity hardware. Kafka can handle hundreds of megabytes of reads and writes per second from a large number of clients.

Figure 8-1 shows a typical scenario for an Apache Kafka messaging system.

As we have mentioned, data is the new ingredient of Internet-based systems. Simply put, a web page needs to know about user activity: logins, page visits, clicks, scrolls, comments, heat zone analysis, shares, and so forth. Traditionally, this data was handled and stored with traditional aggregation solutions. Due to the high throughput, the analysis could not be done until the next day. Today, yesterday’s information is often useless. Offline analysis such as Hadoop is being left out of the new economy.
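To make the "partitioned, commit-log based" idea more concrete, here is a minimal in-memory sketch. It is not Kafka's API: the `PartitionedLog` class, its method names, and the CRC32 key hashing are all assumptions chosen for illustration. It only shows why appending and reading by offset stay constant time regardless of log size, and how routing by key keeps related messages in order.

```python
import zlib


class PartitionedLog:
    """Toy append-only log split into partitions (hypothetical, not Kafka's API)."""

    def __init__(self, num_partitions=3):
        self._partitions = [[] for _ in range(num_partitions)]

    def _partition_for(self, key):
        # Stable hash so the same key always maps to the same partition.
        # CRC32 is an assumption made for this sketch.
        return zlib.crc32(key.encode("utf-8")) % len(self._partitions)

    def append(self, key, value):
        """Append to the end of one partition: amortized O(1), whatever the log size."""
        partition = self._partition_for(key)
        log = self._partitions[partition]
        log.append((key, value))
        return partition, len(log) - 1  # (partition, offset)

    def read(self, partition, offset):
        """Read by position: O(1) list indexing; nothing is removed from the log."""
        return self._partitions[partition][offset]


log = PartitionedLog()
p1, o1 = log.append("user-42", "login")
p2, o2 = log.append("user-42", "click")

# Same key, same partition, consecutive offsets: per-key order is preserved.
assert p1 == p2 and o2 == o1 + 1

# Each consumer tracks its own offset, so readers can replay independently.
offsets = {"dashboard": 0, "audit": 0}
print(log.read(p1, offsets["dashboard"]))  # ('user-42', 'login')
offsets["dashboard"] += 1
print(log.read(p1, offsets["audit"]))      # ('user-42', 'login')
```

Because reading never deletes anything, adding another consumer costs the log nothing; it is just one more offset to remember.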


Nowadays, real-time information is continuously generated. This data needs easy ways to be delivered to multiple types of receivers. Most of the time, the information generators and the information consumers are inaccessible to each other; this is when integration tools enter the scene. In the 1980s, 1990s, and 2000s, the large software vendors whose names have three letters (IBM, SAP, BEA, etc.) and more (Oracle, Microsoft, Google) found a very well-paid market in the integration layer, the layer where enterprise service buses, SOA architectures, integrators, and other panaceas costing several million dollars live.

As we mentioned in earlier chapters, in the big data era the first challenge was data collection and the second challenge was analyzing that huge amount of data. Now, all traditional applications tend to have a point of integration between them, which creates the need for a mechanism for seamless integration between data consumers and data producers, one that avoids any kind of application rewriting at either end. Message publishing is the mechanism for connecting heterogeneous applications by sending messages among them. The message router is known as the message broker. Apache Kafka is a software solution to quickly route real-time information to consumers.
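The role of a message broker between producers and consumers can be illustrated with a small in-memory sketch. This is not Kafka's client API: `ToyBroker`, its methods, and the topic and subscriber names are hypothetical, invented only to show producers publishing to a topic without knowing who reads it.

```python
from collections import defaultdict, deque


class ToyBroker:
    """In-memory stand-in for a message broker (hypothetical, not Kafka's API)."""

    def __init__(self):
        # topic name -> {subscriber name -> queue of pending messages}
        self._queues = defaultdict(dict)

    def subscribe(self, topic, subscriber):
        """Register a subscriber and return the queue it will read from."""
        return self._queues[topic].setdefault(subscriber, deque())

    def publish(self, topic, message):
        """Hand the message to the broker and return right away.

        The producer never sees who is subscribed; it only learns how many
        queues received a copy.
        """
        queues = self._queues[topic].values()
        for queue in queues:
            queue.append(message)
        return len(queues)


broker = ToyBroker()
billing = broker.subscribe("page-visits", "billing")
analytics = broker.subscribe("page-visits", "analytics")

broker.publish("page-visits", {"user": "ana", "page": "/home"})
broker.publish("page-visits", {"user": "bob", "page": "/cart"})

# Each consumer drains its own queue at its own pace.
print(billing.popleft()["user"])  # ana
print(len(analytics))             # 2
```

Neither application needs to be rewritten when a third subscriber appears: the new consumer calls `subscribe`, and the producer's code stays the same.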

Jay Kreps, the author of Apache Kafka, took a lot of literature courses in college. Since the project is mainly optimized for writing (in this book, when we say “optimized” we mean 2 million writes per second on three commodity machines), when he open sourced it he thought it should have a cool name: Kafka, in honor of Franz Kafka, who was a very prolific author despite dying at age 40.